The Rise of Macromanagement (of Agents)

History

Not so long ago, when ChatGPT came to be, people realized we could empower devs to make surgical changes very quickly. It started with copy-pasting from the web UI. Cursor quickly arrived and offered a best-in-class AI-human collaboration UX. You could call agents from the sidebar and review the diff directly in the UI.

It was fine for small, surgical changes, but the human would often have to be the one thinking about the side effects of each change. Making a schema change in the backend meant prompting the agent to modify the models.py file, then asking it to update the serializer, then the views. The agent was quick, but often missed the bigger picture.

Not only that, but contexts filled up quickly. Early models could fit fewer than 100,000 tokens, which wasn't enough to work on large, complex codebases. And if you needed to make multiple related changes, you'd quickly run out of context window, meaning you'd need to start a new conversation and re-explain the context, what you were doing, and your current progress. It still saved time, but the incredible efficiency gains weren't quite as magical as advertised.

We started seeing an uptick in the popularity of spec-driven development. With proper specs, you had less explaining to do. And because the spec included extra information about the stack, agents were aware that you were, say, in a Django codebase, and likely needed to update models, serializers and views. As a result, the model needed less hand-holding. You didn't need to explain your whole stack in each prompt, as the agent got it for free by reading the spec.

This made changes much more consistent and "bigger-picture-aware". With the agent knowing you were expecting to use WebSockets to move X data around after processing, it could decide on a schema that was WebSocket-friendly when normalizing the processing output. And you didn't even need to tell it!

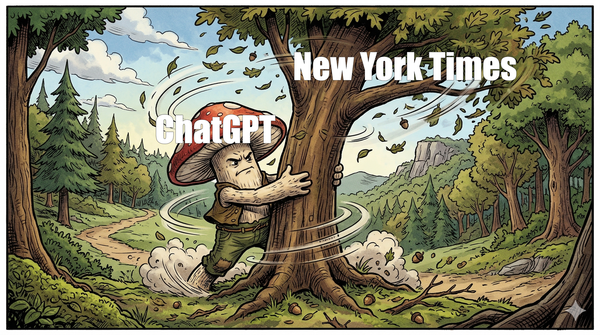

Unfortunately, this was still very surgical. People were making changes prompt by prompt and reviewing each diff, because context windows were relatively small and models weren't quite powerful enough to feel like good devs you could trust blindly. It mostly felt like having an intern on cocaine. You really had to micro-manage to get anywhere.

But this changed towards the end of 2025.

Where we're at today

A combination of "smarter" models with ginormous context windows and better agent harnesses allowed agents to work on larger, more complex codebases in ways that truly started feeling like asking a colleague to help with a code change.

You could one-shot simple apps that would take hours to days for humans to code. It was huge. This is pretty much where the mainstream side of the story ends, but we can keep going.

By combining spec-driven development and these new model capabilities, it became possible to work collaboratively with models and one-shot bigger and bigger changes. I'd find myself prompting a large, multi-component change like "Set up the scaffolding for my backend, frontend, reverse proxy, etc." The model would know from the specs that I wanted docker-compose, Django, React and NGINX, and would create a "hello-world" version of the whole app with little to no issues. This might be a greenfield boilerplate example, but the same applies later in the development process.

What's Next?

This is a pretty fun spot to be in. But we can go further. We already have a spec built and we trust our agent to make relatively large changes. Humans are still needed because minor deviations and errors accumulate. The modus operandi looks like this:

- The agent looks at the spec and decides what to work on next. It implements the change mostly correctly, but introduces a small deviation from the spec.

- When it eventually comes back around to that part of the code for another feature, the agent sees the deviation and decides that fixing it is not in scope of the current work. It adds a backwards-compatibility layer or some weird hacky workaround.

- This behavior compounds over a couple of iterations, and eventually the complexity is such that the model can't make any meaningful progress: it's stuck in workaround hell. Code starts getting bloated and the clean interfaces defined in the spec don't mean anything anymore.

At this point, a human has to intervene and clean things up, which is notoriously when most vibe-coded apps start being abandoned. But there are ways to prevent this failure mode.

This blog post from Anthropic lays out a couple of ways to fix this, and it all boils down to keeping the code consistently aligned with the spec. You can use adversarial generation to have your code evaluated frequently for its alignment with the specs. If it deviates, fix early. It's like trying to build a tall Jenga tower: start stacking blocks, but once in a while, check that the lower levels are stacked neatly so the tower has good foundations to grow on.

I've personally experimented a lot with this method, and it works wonders. I'll ask Claude Code to spawn a subagent to review the current git diff against the current task and the specs. The review comes back with potential bugs, code smells, and deviations from the spec. From there, the agent can automatically fix bugs and code smells. Deviations can also be fixed automatically, but they present an interesting opportunity: they sometimes surface underspecced or misspecced designs. If the spec says service A needs to send data to service B through a mechanism that doesn't make sense in practice (for example because of networking constraints that weren't thought of during the spec design), the spec might need to be adjusted.
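To make the triage step concrete, here is a minimal Python sketch of how I think about it. Everything in it is hypothetical: the `Finding` type, the `spec_looks_wrong` flag and the prompt wording are illustrative assumptions, not part of Claude Code or any other tool. It only shows the idea of building a review prompt from the diff, the task and the spec, then splitting the findings into what the agent can fix on its own and what needs a human decision about the spec.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Kind(Enum):
    BUG = "bug"
    CODE_SMELL = "code_smell"
    SPEC_DEVIATION = "spec_deviation"


@dataclass(frozen=True)
class Finding:
    """One item from the reviewer's report (hypothetical shape)."""
    kind: Kind
    summary: str
    # Assumed flag: the reviewer suspects the spec itself is the problem.
    spec_looks_wrong: bool = False


def build_review_prompt(diff: str, task: str, spec: str) -> str:
    """Assemble the text handed to the reviewing subagent."""
    return (
        "Review the diff below against the task and the spec.\n"
        "Report bugs, code smells, and deviations from the spec.\n\n"
        f"Task:\n{task}\n\nSpec:\n{spec}\n\nDiff:\n{diff}\n"
    )


def triage(findings: list[Finding]) -> tuple[list[Finding], list[Finding]]:
    """Split findings into (agent can fix, human must decide)."""
    auto_fix: list[Finding] = []
    needs_human: list[Finding] = []
    for finding in findings:
        # Only deviations that question the spec itself go to the human.
        if finding.kind is Kind.SPEC_DEVIATION and finding.spec_looks_wrong:
            needs_human.append(finding)
        else:
            auto_fix.append(finding)
    return auto_fix, needs_human
```

In practice the diff would come from `git diff` and the findings from whatever the reviewing subagent reports; the point is that only the last bucket reaches me.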

In this model, human intervention shifts from reviewing diff by diff to making design, scope and alignment decisions. It requires good design acumen: what does it mean to change the spec in such and such ways, what will the side effects be, what constraints might it violate, is this still what the users need, is this still what whoever is paying for this wants, etc.

You go from micro-managing the agent to macro-managing it. You are not in charge of each individual diff. You're in charge of making sure that you have a clear direction and strategy, and of letting the agent execute and challenge this strategy.

Going forward, I think people with good systems thinking and wide knowledge of software development best practices will be at a great advantage. We don't HAVE to micro-manage the implementation of features. All we need is to come up with a plan, and to keep challenging this plan in the face of developments. It's sort of bringing the agile methodology to the waterfall method: you need a full spec and design up front, but you frequently reevaluate it in the face of what comes up during implementation.