The Two-Tier Web

According to TechCrunch, bot traffic across the web will exceed human traffic as early as 2027. Most people haven't noticed, but this is creating an enormous shift in how the web sustains itself.

Humans, websites, and advertisers have had a symbiotic relationship for decades: humans view websites, and websites cover their operating costs through ads. All good. But with the advent of AI bots, this equilibrium is breaking. Bots don't click on ads. They extract value and move on.

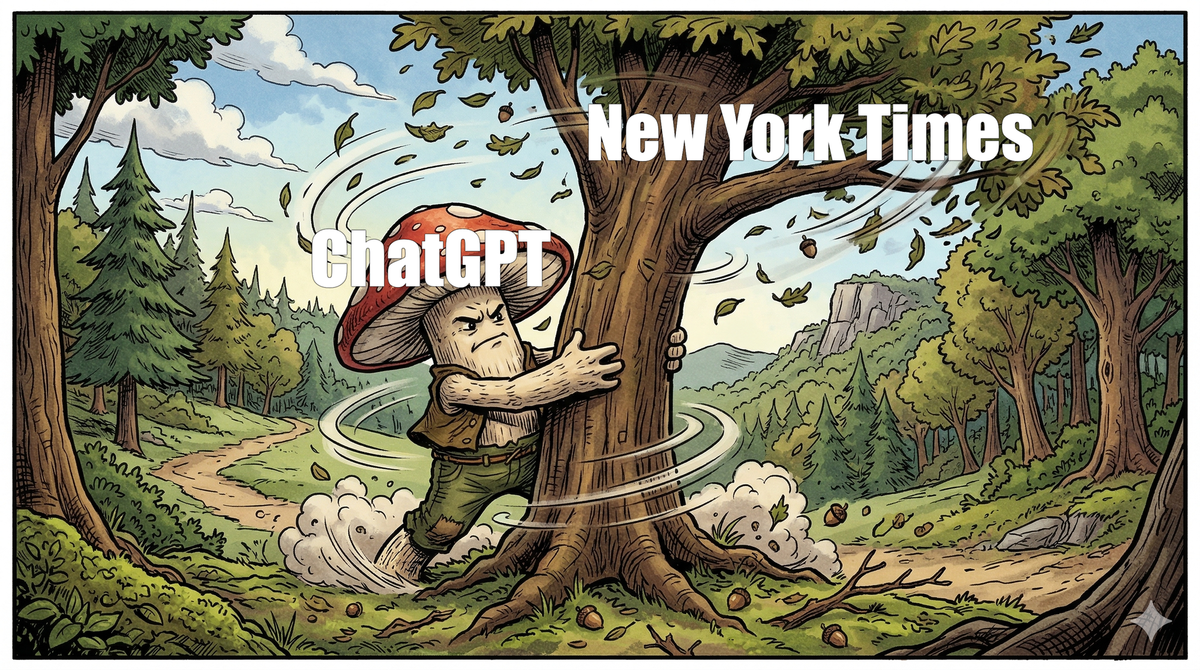

This has already thrown the ecosystem into crisis. The New York Times is suing OpenAI. Stack Overflow watched its traffic plummet after AI coding tools, trained on its own data, made visiting the site far less necessary. Organizations are realizing that AI is siphoning their data without giving anything back. And AI providers are realizing they're going to have to pay for content one way or another - through licensing deals or through lawsuits.

But we're starting to see emergent business models that could bring things back to homeostasis. Cloudflare - which sits in front of roughly 20% of all websites - is building tools to let site owners detect AI bots and set prices for access. Companies like Troveo and Calliope let individual creators license content directly to AI labs. Reddit is backing Really Simple Licensing (RSL), modeled on music industry clearinghouses like ASCAP, to standardize how AI developers license and compensate publishers. Meanwhile, Curiosity Stream - a small documentary streaming service - now expects its AI licensing revenue to surpass its subscription revenue by 2027.
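To make the "detect bots and set a price" idea concrete, here is a minimal sketch of what such a gate might look like. It is not Cloudflare's actual API: `decide_access`, `AccessDecision`, the default price, and the user-agent matching are all assumptions made for illustration, and real bot detection relies on far richer signals than a user-agent string. The only standard piece is HTTP status 402 ("Payment Required").

```python
from dataclasses import dataclass
from typing import Optional

# Illustrative user-agent substrings for a few well-known AI crawlers.
# Assumption: a real system would not rely on user-agent strings alone.
AI_BOT_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot", "CCBot")


@dataclass(frozen=True)
class AccessDecision:
    status: int                        # HTTP status the site would return
    price_usd: Optional[float] = None  # quoted or charged price, if any


def decide_access(user_agent: str, has_paid: bool,
                  price_usd: float = 0.01) -> AccessDecision:
    """Hypothetical gate: humans pass freely, AI bots must pay."""
    ua = user_agent.lower()
    is_ai_bot = any(token.lower() in ua for token in AI_BOT_TOKENS)
    if not is_ai_bot:
        return AccessDecision(status=200)  # human layer is untouched
    if has_paid:
        return AccessDecision(status=200, price_usd=price_usd)
    # 402 Payment Required: quote the price instead of serving content.
    return AccessDecision(status=402, price_usd=price_usd)
```

In this sketch a browser request gets a plain 200, while an unpaid request from a known crawler gets a 402 with a quoted price.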

So my impression is that we're heading toward a web with two distinct layers. The human layer will hopefully remain unchanged - you'll still browse, read, and watch as you always have. But underneath, an invisible layer will emerge where bots and websites exchange money and content through formalized data contracts, with clear pricing and usage policies (pay-once and pay-per-use, for example). Which pricing model fits will likely depend on how time-sensitive and unique the content is.
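Here is a rough sketch of what one of these data contracts could look like in code. Nothing here reflects an existing standard: `DataContract`, `PricingModel`, the field names, and the prices are all hypothetical, chosen only to show how pay-once and pay-per-use might differ.

```python
from dataclasses import dataclass
from enum import Enum


class PricingModel(Enum):
    PAY_ONCE = "pay-once"        # one flat fee, e.g. for a static archive
    PAY_PER_USE = "pay-per-use"  # fee on every request, e.g. for fresh news


@dataclass
class DataContract:
    """Hypothetical contract between a publisher and a bot operator."""
    publisher: str
    model: PricingModel
    price_cents: int         # flat fee (PAY_ONCE) or per-request fee
    allowed_uses: frozenset  # usage policy, e.g. {"search", "training"}
    requests_served: int = 0

    def charge(self, use: str) -> int:
        """Return the amount owed (in cents) for one request."""
        if use not in self.allowed_uses:
            raise PermissionError(f"use '{use}' is not licensed")
        self.requests_served += 1
        if self.model is PricingModel.PAY_ONCE:
            # Only the first request is billed; later ones are free.
            return self.price_cents if self.requests_served == 1 else 0
        return self.price_cents


archive = DataContract("example-archive", PricingModel.PAY_ONCE,
                       5000, frozenset({"training"}))
news = DataContract("example-news", PricingModel.PAY_PER_USE,
                    2, frozenset({"search"}))
```

Amounts are kept in integer cents to avoid floating-point rounding. A static archive might suit pay-once, while time-sensitive content like news might suit pay-per-use.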

This even extends beyond content. Perplexity AI recently launched shopping agents that browse and buy on Amazon on behalf of users - and Amazon sued to stop them. The two-tier web isn't just about articles and videos. It's about every transaction and interaction that bots will perform on the human web.

Mycelium would probably be a good analogy for where this is heading. This bot layer could become an invisible, complex network of value exchange that sustains the web rather than draining it. How lovely would that be?